What You Can Actually Do With a 16GB VRAM GPU and Local AI

If you have a 16GB VRAM GPU sitting in your workstation, you're closer to running your own AI infrastructure than you think. Here's what 353 real runs look like.

There’s a version of local AI that gets talked about online a lot. Massive server racks. Tens of thousands of dollars in hardware. Enterprise-grade everything.

That’s not what I’m talking about here.

This is about what happens when a solo builder with a decent workstation decides to stop paying per token and start running their own inference. Not to serve users. Not to build a startup. Just to automate personal work reliably, cheaply, and without sending everything to the cloud.

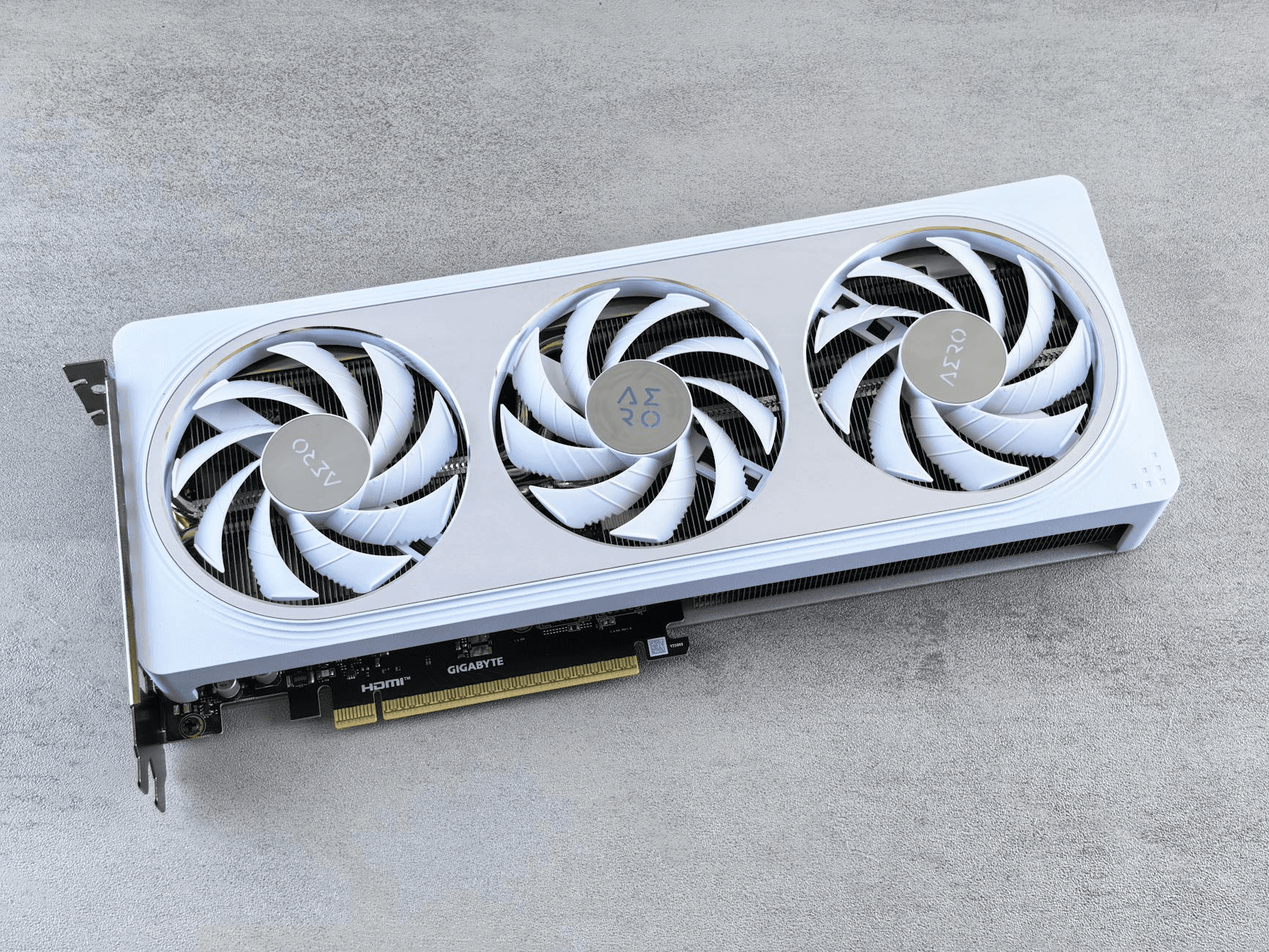

If you have a GPU with 16GB VRAM, like an RTX 5080, an RTX 4080, or anything in that class, you are much closer to running your own useful AI setup than most people think.

The setup

My workstation runs an RTX 5080 with 16GB VRAM, a Ryzen 9, and 32GB of system RAM. Ollama handles model serving. A Cloudflare tunnel makes it accessible remotely, so it is not just a local box. I can hit it from anywhere.

It was not plug-and-play. There was some configuration involved. But once it was running, it stayed running. No subscriptions. No rate limits. No API bill waiting at the end of the month.

That part matters more than the hardware bragging rights. The real question is not whether you can do this. You can. The question is whether it performs well enough to be useful.

353 runs later

After 353 inference runs across 30 days, three models settled into rotation.

The first was llama3:latest. It averaged 121 tok/s, with response times of around 7 seconds. It used 4.5GB of VRAM, kept the GPU at about 94% utilisation, and ran at around 43°C. Fast, reliable, light on resources. It is the model you reach for when you need something quick and consistent.

The second was qwen2.5:7b. This became the standout. It averaged 139 tok/s, with 9-second responses and 4.85GB of VRAM. Peak throughput hit 169 tok/s on a good run. Output quality was better than llama3 for anything involving writing or structured content, while staying just as fast. It became the default for production pipeline runs.

The third was qwen3:14b. It averaged 51 tok/s, with a 99-second average response time and 5.3GB of VRAM, while the GPU hit 61°C. It is slow by comparison, and that is by design: the model runs a built-in reasoning phase before it responds. That adds latency, but it also adds quality on complex tasks. It is not a model for quick jobs, but it is genuinely useful for harder ones.

A fourth model, Devstral:24b, Mistral’s purpose-built agentic model, ran 5 times and got cut. It averaged 36 tok/s, ran the GPU at a comparatively low 84% utilisation, and could not reliably call tools. More on that in a separate article.
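The tok/s and response-time figures above are the kind of numbers Ollama reports in its own response metadata. The following sketch uses only Python's standard library and assumes Ollama's default local endpoint on port 11434. It calls `/api/generate` with streaming off and divides `eval_count` (generated tokens) by `eval_duration` (nanoseconds). The `OLLAMA_URL` variable and the helper names are hypothetical, and this is not necessarily how the runs above were measured. GPU utilisation and temperature would come from a separate tool such as `nvidia-smi`.

```python
import json
import os
import statistics
import urllib.request

# Hypothetical variable name; defaults to Ollama's standard local port.
# For remote access, point it at the tunnel hostname instead.
OLLAMA_URL = os.environ.get("OLLAMA_URL", "http://localhost:11434")


def generate(model: str, prompt: str, timeout: float = 300.0) -> dict:
    """Send one non-streaming request to Ollama's /api/generate endpoint."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read())


def tokens_per_second(result: dict) -> float:
    """Generation speed: output tokens over generation time (durations are in ns)."""
    return result["eval_count"] / (result["eval_duration"] / 1e9)


def summarise(results: list[dict]) -> dict:
    """Average and peak tok/s, plus mean wall-clock seconds per response."""
    speeds = [tokens_per_second(r) for r in results]
    return {
        "runs": len(results),
        "avg_tok_s": statistics.mean(speeds),
        "peak_tok_s": max(speeds),
        "avg_response_s": statistics.mean(r["total_duration"] / 1e9 for r in results),
    }
```

Against a running Ollama instance, `summarise([generate("qwen2.5:7b", p) for p in prompts])` returns the run count, average and peak tok/s, and mean time per response. `total_duration` covers model loading and prompt processing as well as generation, so it tracks perceived response time more closely than `eval_duration` does.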

What 16GB VRAM actually means

The VRAM ceiling matters more than people expect.

The rule is simple: keep your model fully in VRAM. The moment layers spill into system RAM, you pay a severe performance penalty, because the offloaded layers then run from memory with only a fraction of the GPU's bandwidth. Benchmarks on the RTX 5090, a 32GB card, show that running a 70B model with even partial RAM offload drops throughput to under 5 tokens per second. A card that should be fast becomes sluggish the moment the model does not fit.
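To put rough numbers on that penalty, recall that a dense model reads essentially every weight once for each generated token. That makes decode speed bounded by memory bandwidth. The back-of-envelope model below assumes about 960 GB/s for the RTX 5080's GDDR7 and about 80 GB/s for dual-channel DDR5 system RAM. The RAM figure is an assumption and depends on your memory kit. The model ignores compute, the KV cache and PCIe traffic, so it gives an idealised ceiling rather than a prediction.

```python
def decode_ceiling_tok_s(model_gb: float, vram_for_weights_gb: float,
                         gpu_bw_gbs: float = 960.0, ram_bw_gbs: float = 80.0) -> float:
    """Idealised upper bound on decode speed (tokens/s) for a dense model.

    Assumes every weight is read once per generated token: the part held in
    VRAM streams at GPU bandwidth, the spilled part at system-RAM bandwidth.
    Compute time, KV-cache reads and PCIe transfers are ignored.
    """
    on_gpu_gb = min(model_gb, vram_for_weights_gb)
    spilled_gb = model_gb - on_gpu_gb
    seconds_per_token = on_gpu_gb / gpu_bw_gbs + spilled_gb / ram_bw_gbs
    return 1.0 / seconds_per_token


# Assumed bandwidths: ~960 GB/s (RTX 5080 GDDR7), ~80 GB/s (dual-channel DDR5).
fully_in_vram = decode_ceiling_tok_s(model_gb=4.8, vram_for_weights_gb=14.0)
partly_offloaded = decode_ceiling_tok_s(model_gb=19.0, vram_for_weights_gb=14.0)
print(f"fits in VRAM: ~{fully_in_vram:.0f} tok/s ceiling")
print(f"5GB offloaded: ~{partly_offloaded:.0f} tok/s ceiling")
```

With these assumed figures, a ~4.8GB model held entirely in VRAM has a ceiling of about 200 tok/s, comfortably above the 139 tok/s observed for qwen2.5:7b. A ~19GB model with 14GB in VRAM falls to roughly 13 tok/s, because the 5GB left in system RAM accounts for most of the time spent on each token.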

With 16GB, you can work comfortably with 7B to 14B parameter models at 4-bit quantization. That is the sweet spot. Small enough to fit entirely in VRAM, capable enough to produce genuinely useful output.

qwen2.5:7b at 139 tok/s is not a compromise. It is fast, sharp, and fits with room to spare at 4.85GB. Pushing to 32B models on 16GB is technically possible, but practically painful. Even at 4-bit quantization, a 32B model needs roughly 18 to 20GB, so part of it has to be offloaded to system RAM. The speed hit from that partial offload usually makes a smaller, faster model the smarter choice.
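The 7B-to-14B sweet spot follows from simple arithmetic. Weight memory is roughly the parameter count times the bits per weight, divided by eight. The sketch below assumes about 4.5 bits per weight, which is in the range of common 4-bit quantizations, plus a flat 1GB allowance for the KV cache and runtime buffers. Both numbers are assumptions, and real usage grows with context length.

```python
def estimate_vram_gb(params_billion: float, bits_per_weight: float = 4.5,
                     overhead_gb: float = 1.0) -> float:
    """Rough VRAM estimate in GB: quantised weights plus a flat allowance.

    1e9 parameters at b bits each take b / 8 GB, so weights cost
    params_billion * bits_per_weight / 8. The overhead stands in for the
    KV cache and runtime buffers, which in reality grow with context length.
    """
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb + overhead_gb


def fits_in_vram(params_billion: float, vram_gb: float = 16.0, **estimate_kwargs) -> bool:
    """True if the rough estimate fits within the card's VRAM."""
    return estimate_vram_gb(params_billion, **estimate_kwargs) <= vram_gb


# Assumed: ~4.5 bits/weight and 1GB overhead, checked against a 16GB card.
for size in (7, 14, 24, 32):
    print(f"{size}B: ~{estimate_vram_gb(size):.1f} GB, fits in 16GB: {fits_in_vram(size)}")
```

Under these assumptions the loop reports about 4.9GB for 7B, 8.9GB for 14B, 14.5GB for 24B and 19.0GB for 32B. Only 32B fails the check outright. 24B fits with so little headroom that a longer context could push it over the line.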

What it is actually good for

Local inference at this scale is great for personal workflow automation where you control the volume.

Scheduled tasks. Content pipelines. Local scripts that need a language model in the loop. That is the zone where this setup works without drama.
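As one illustration of a local script with a model in the loop, here is a minimal overnight batch runner. It assumes Ollama on its default local port and the `qwen2.5:7b` model from the runs above. Prompts are processed one at a time, and each result or error is appended to a JSONL file, so a failure partway through does not lose earlier work. The function names and output path are hypothetical. The `generate` parameter exists so the model call can be swapped out, or faked in tests.

```python
import json
import urllib.request
from pathlib import Path
from typing import Callable, Iterable

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def ollama_generate(prompt: str, model: str = "qwen2.5:7b") -> str:
    """Blocking call to Ollama's /api/generate; returns only the generated text."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(f"{OLLAMA_URL}/api/generate", data=body,
                                 headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(req, timeout=600) as resp:
        return json.loads(resp.read())["response"]


def run_batch(prompts: Iterable[str], out_path: Path,
              generate: Callable[[str], str] = ollama_generate) -> int:
    """Run prompts sequentially, appending one JSON record per prompt.

    Errors are recorded instead of aborting, so one bad prompt does not kill
    an overnight run. Returns the number of successful generations.
    """
    ok = 0
    with out_path.open("a", encoding="utf-8") as out:
        for i, prompt in enumerate(prompts):
            record = {"index": i, "prompt": prompt}
            try:
                record["output"] = generate(prompt)
                ok += 1
            except Exception as exc:  # network errors, timeouts, malformed replies
                record["error"] = repr(exc)
            out.write(json.dumps(record) + "\n")
            out.flush()  # keep partial progress if the job is interrupted
    return ok
```

You can start a small script that calls `run_batch` from a scheduler such as cron (for example, a `0 2 * * *` entry for 2 a.m. daily) or Windows Task Scheduler. The run then happens while the workstation would otherwise sit idle.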

The key distinction is that you are not building for users. You are building for yourself. That changes the math entirely. A 9-second response from qwen2.5:7b is fine when the job runs overnight. A 99-second response from qwen3:14b is fine when I am generating one thoughtful piece of content, not running a queue of fifty.

For anything requiring structured JSON output on critical pipeline steps, a frontier API is still more reliable. I would not pretend otherwise. But for the bulk of generation work, the volume tasks, the “run this fifty times” use cases, local inference handles it. The output quality has been good enough to stay in production for a month without issues.
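One way to act on that split is to validate the local model's JSON and fall back to a frontier API only when validation fails. This is a sketched assumption, not the setup described above. Ollama's `/api/generate` accepts a `format` field (`"json"` or a JSON schema) that makes valid JSON more likely, but a check like this still catches missing keys. Both `local` and `fallback` are hypothetical callables that take a prompt and return raw text.

```python
import json
from typing import Callable, Optional


def parse_structured(raw: str, required_keys: set[str]) -> Optional[dict]:
    """Return the parsed object if raw is a JSON object with every required key, else None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or not required_keys.issubset(data):
        return None
    return data


def structured_step(prompt: str, required_keys: set[str],
                    local: Callable[[str], str], fallback: Callable[[str], str],
                    retries: int = 1) -> dict:
    """Try the local model first; use the fallback only if its JSON never validates."""
    for _ in range(retries + 1):
        data = parse_structured(local(prompt), required_keys)
        if data is not None:
            return data
    data = parse_structured(fallback(prompt), required_keys)
    if data is None:
        raise ValueError("fallback model also returned invalid JSON")
    return data
```

With the default `retries=1`, the local model gets two attempts before the fallback is called. The API is then only paid for on steps where local output genuinely fails.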

The honest starting point

16GB VRAM is enough. You do not need a 5090. You do not need dual cards.

For personal automation at solo-builder scale, a single mid-to-high range GPU running 7B to 14B models gets the job done.

The models have crossed a threshold. qwen2.5:7b running at 139 tok/s on a consumer GPU, fully local, at zero API cost per run: that was not the reality two years ago. It is now.

If you have been sitting on the fence about whether local AI is worth exploring, my short answer is yes. Start with a 7B model, see what it can do for your workflow, and go from there.

The cloud API is not going anywhere. But you do not need it for everything anymore.